Search is no longer the only way people find brands. A fast-growing segment of your audience is typing questions into ChatGPT, Claude, Perplexity, and Gemini and getting complete answers back without ever visiting a search results page. Those answers come with citations. If your brand is in those citations, you exist to that user. If you are not, you are invisible to them, regardless of how well you rank on Google.

This guide covers everything: what LLM visibility actually is, how it differs from SEO, how AI systems decide which sources to cite, how to optimize for it, how to measure it, and what the future looks like. This is the core of what the LLM Visibility Lab exists to help you understand and act on.

What LLM visibility actually means

LLM visibility is the measure of how often and how favourably your brand, content, or website appears inside responses generated by large language models. When someone asks ChatGPT to recommend the best SEO tools, or asks Claude to explain how content clusters work, or asks Perplexity for a comparison of AI visibility platforms, the sources cited in those answers represent LLM visibility for the brands and pages included.

It is not a ranking. There is no position one or position two in the way traditional search works. Instead, your brand either appears in the AI's answer or it does not. You are either cited or you are skipped. And unlike traditional search, where appearing on page two still gives you some theoretical chance of a click, not being cited by an LLM means you are completely absent from that user's consideration set at that moment.

The term covers several related but distinct forms of appearance. An LLM might mention your brand by name in a recommendation. It might cite one of your pages as a source for a specific claim. It might describe your product in a comparison. It might reference your research data. Each of these counts as LLM visibility, and each has a different optimization path.

How LLM visibility differs from traditional search engine visibility

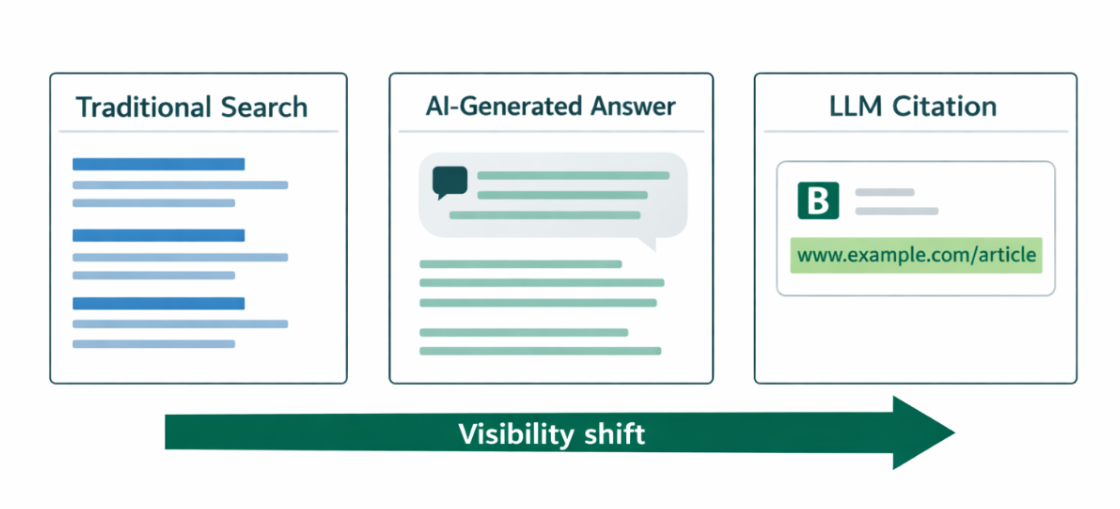

Traditional search engine visibility is about ranking. The higher you appear in Google's results for a given keyword, the more clicks you receive. SEOs measure this through keyword rankings, organic traffic, and click-through rates. The entire model is built around a list of links that users browse and choose from.

LLM visibility operates on a completely different logic. Large language models do not present a list of options. They synthesize an answer and select sources to support it. The user sees one response, not ten links. This creates a winner-takes-most dynamic where the sources cited in the AI's answer capture the user's attention entirely, and everything else is invisible.

| Dimension | Search engine visibility | LLM visibility |
| --- | --- | --- |
| Output format | Ranked list of links | Synthesized answer with citations |
| User action required | User browses and selects | AI selects the sources; the user reads the answer |
| Volume of sources shown | 10+ results per page | Typically 3–6 cited sources |
| Key metric | Rankings, CTR, organic traffic | Citation rate, brand mention frequency |
| Primary signal | Backlinks and on-page SEO | Entity strength, content clarity, brand mentions |
| Click-through behavior | Most traffic comes from clicks | Most users don't click, but those who do convert at high rates |
| Optimization focus | Keywords, authority, technical SEO | Answer-first writing, topic clusters, off-site mentions |

Importantly, the two are not in opposition. Research by Ahrefs across 400+ high-intent keywords found that page-one Google rankings correlate strongly with LLM citations. Clients ranking on page one were cited by ChatGPT 67% of the time and by Perplexity 77% of the time. SEO remains your foundation. LLM visibility is the layer above it that determines whether your content gets selected when AI synthesizes an answer.

Why LLM visibility matters for your brand right now

The scale of AI-assisted search is no longer experimental. ChatGPT reached 800 million weekly active users by late 2025, double its user count from just nine months earlier. Perplexity processes 780 million queries per month. Google AI Overviews now appear on more than a quarter of all searches. These are not niche use cases. They are mainstream discovery channels.

What makes LLM visibility strategically urgent is not volume; it is quality. LLM-referred users arrive with context. They have already received a synthesized answer and are visiting your site for verification, deeper information, or to take action. LLM referral traffic converts at significantly higher rates than traditional organic search across studies tracking this channel. Low volume with high intent often outperforms high volume with low intent in actual business outcomes.

There is also a compounding effect that most brands are not yet accounting for. Brands that optimize now by cleaning entity data, earning authoritative citations, and tracking AI presence will own the narrative as AI platforms mature. Like early SEO adopters who built domain authority when the field was nascent, brands building LLM visibility today are establishing a citation footprint that becomes increasingly difficult for competitors to displace.

How LLMs decide which sources to cite

Understanding citation selection is the foundation of LLM visibility optimization. Large language models do not randomly select sources. Their citation behavior is driven by specific, measurable signals, some familiar from traditional SEO, some entirely new.

Parametric knowledge vs. retrieved knowledge

LLMs draw from two fundamentally different knowledge sources. Parametric knowledge is everything the model learned during training, static and fixed at the training cutoff date, and retrieved from memory in milliseconds. Retrieved knowledge is sourced in real time through retrieval-augmented generation (RAG), where the model searches the web, evaluates current documents, and incorporates that information into its response.

For LLM visibility purposes, retrieved knowledge is where the optimization opportunity lies. 60% of ChatGPT queries are answered from parametric knowledge alone, but the remaining 40%, which covers time-sensitive topics, current recommendations, recent data, and anything requiring up-to-date sourcing, relies on RAG retrieval. These are the queries where structured, fresh, well-cited content has the greatest advantage.
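The retrieval path can be sketched in a few lines. This is a toy illustration under stated assumptions, not a description of how any particular assistant works: the `retrieve` and `build_prompt` functions, the word-overlap scoring, and the sample pages are all invented for the example. Production systems rely on web search indexes and far more sophisticated ranking.

```python
def retrieve(query, pages, k=2):
    """Return up to k pages sharing the most words with the query (toy scoring)."""
    query_terms = set(query.lower().split())

    def overlap(page):
        return len(query_terms & set(page["text"].lower().split()))

    ranked = sorted(pages, key=overlap, reverse=True)
    return [page for page in ranked[:k] if overlap(page) > 0]


def build_prompt(query, sources):
    """Assemble the retrieved text into a prompt with numbered citations."""
    context = "\n".join(
        f"[{i}] {page['url']}: {page['text']}"
        for i, page in enumerate(sources, start=1)
    )
    return (
        "Answer using only these sources and cite them by number.\n"
        f"{context}\n\nQuestion: {query}"
    )


# Hypothetical pages standing in for live web results.
pages = [
    {"url": "https://example.com/llm-visibility",
     "text": "llm visibility measures how often a brand is cited in ai answers"},
    {"url": "https://example.com/recipes",
     "text": "a simple recipe for tomato soup"},
    {"url": "https://example.com/seo",
     "text": "seo visibility is about ranking in search results"},
]

sources = retrieve("what is llm visibility", pages)
prompt = build_prompt("what is llm visibility", sources)
```

Only the pages that survive the retrieval step reach the prompt, which is why retrieved queries are the ones where page-level optimization can change the outcome.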

The signals that drive citation selection

Across major studies analyzing hundreds of millions of LLM citations, a consistent set of signals emerges as the primary drivers of whether a page gets cited:

| Citation signal | What it means | Impact on visibility |
| --- | --- | --- |
| Brand search volume | How often users search for your brand on Google | The strongest predictor of LLM citations (0.334 correlation), stronger than backlinks |
| Off-site brand mentions | How frequently your brand is mentioned across the web in a relevant context | Brands in the top 25% for web mentions earn 10x more AI citations than the next quartile |
| Domain authority and backlinks | Quality and quantity of sites linking to you | Sites with 32K+ referring domains are 3.5x more likely to be cited by ChatGPT |
| Content freshness | How recently pages were published or updated | 65% of AI bot traffic targets content updated within the last year |
| Page load speed | How quickly your page loads for crawlers | Pages with FCP under 0.4s average 6.7 citations; pages over 1.13s average just 2.1 |
| Answer-first structure | Whether the page leads with a direct answer | 44.2% of all LLM citations come from the first 30% of a page's text |
| Original data and statistics | Whether the page contains unique research or data | Adding statistics increases AI visibility by 22%; adding quotations increases it by 37% |

LLM visibility vs SEO vs AEO, understanding where each fits

These three concepts are related but distinct, and confusing them leads to a misaligned strategy. Understanding how they differ and how they overlap is essential for building a visibility plan that actually works across all three.

| | SEO | AEO | LLM visibility |
| --- | --- | --- | --- |
| What it optimizes for | Google rankings and organic clicks | Featured Snippets and Google AI Overviews | Citations in ChatGPT, Claude, Perplexity, Gemini |
| Primary platform | Google (and Bing) | Google AI Overviews, Answer boxes | All major LLMs across platforms |
| Output measured | Keyword rankings, organic traffic | AIO appearances, featured snippet capture | Citation rate, brand mention frequency |
| Core technique | On-page SEO, link building, and technical SEO | Answer-first writing, structured data, FAQs | Entity building, off-site mentions, and content structure |
| Relationship to others | Foundation for both AEO and LLM visibility | Middle layer that feeds into LLM visibility | Builds on SEO and AEO foundation |

Think of it as a stack. Strong SEO builds the foundation. Answer engine optimization (AEO) captures the Google AI Overview layer. LLM visibility extends that presence across all major AI platforms. Each layer builds on the one beneath it. A brand that invests in SEO without thinking about LLM visibility is leaving the top of the stack empty.

How to optimize for LLM visibility: a step-by-step framework

LLM visibility optimization is not a single tactic. It is a system of interconnected actions that reinforce each other over time. The six steps below should be implemented in order: technical access first, then off-site presence, then content structure and entity signals, then training-data sources, and finally monitoring.

Step 1: Ensure AI crawlers can access your content

No optimization effort matters if AI crawlers cannot reach your pages. This is the most commonly overlooked starting point. Many sites accidentally block AI crawlers through broad disallow rules in robots.txt that were added to block training scrapers, but inadvertently block search and retrieval bots as well.

A publisher who wants citation visibility but not training data collection should allow search and retrieval bots while blocking training scrapers. Confirm also that your important pages are server-side rendered: most AI crawlers do not execute JavaScript, so JavaScript-only content is invisible to them.
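One way to audit a robots.txt policy bot by bot is Python's standard `urllib.robotparser`. The sketch below is illustrative only. The user-agent tokens (`GPTBot`, `CCBot`, `OAI-SearchBot`, `PerplexityBot`) and their split into training and retrieval groups are assumptions that should be confirmed against each vendor's current documentation, and both policies are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; confirm each against the vendor's documentation.
TRAINING_BOTS = ["GPTBot", "CCBot"]
RETRIEVAL_BOTS = ["OAI-SearchBot", "PerplexityBot"]

# Hypothetical policy: block training scrapers, allow search and retrieval bots.
SELECTIVE_POLICY = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /
"""

# The kind of broad rule that blocks retrieval bots by accident.
BROAD_POLICY = """\
User-agent: *
Disallow: /
"""


def audit(robots_txt, bots, url="https://example.com/guide"):
    """Report, per bot, whether the given robots.txt text lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


selective = audit(SELECTIVE_POLICY, TRAINING_BOTS + RETRIEVAL_BOTS)
broad = audit(BROAD_POLICY, TRAINING_BOTS + RETRIEVAL_BOTS)
```

Under the selective policy the two retrieval bots are allowed and the two training bots are refused, while the broad policy refuses all four. Running the same audit against your live robots.txt shows at a glance which bots a catch-all rule is shutting out.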

Step 2: Build off-site brand mentions systematically

Off-site brand mentions are among the strongest predictors of LLM citation frequency. This finding appears consistently across major studies. LLMs understand your brand based on how it is described and referenced across their training data and real-time retrieval sources. The more often your brand is mentioned in relevant context across authoritative platforms, the stronger the entity signal your brand carries.

The platforms that matter most for LLM citation are user-generated content sites (Reddit, Quora), third-party review platforms (G2, Trustpilot, Capterra), YouTube, Wikipedia (where applicable), and high-authority industry publications.

Step 3: Structure content for AI extraction

AI systems extract meaning at the section level, not the page level. Each section of your page competes independently to be cited. Sections that open with a direct answer, use a clear heading that mirrors a real user question, and contain verifiable data or specific claims are far more likely to be extracted and cited than sections that build context slowly or stay vague.

The answer-first writing model applies across every section: lead with the direct response, follow with explanation, then add supporting depth. Use question-based H2 and H3 headings. Keep paragraphs short. Include FAQ sections to capture follow-up query extraction. Build topic clusters with strong internal linking so AI systems can contextualize your authority across related pages rather than evaluating each page in isolation.
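Some of these structural rules can be checked mechanically. The following is a rough lint sketch, assuming the page source is available as Markdown with `##` and `###` headings. The `audit_sections` function, the list of question words, and the 80-word paragraph limit are arbitrary choices made for illustration, not established thresholds.

```python
import re

# Assumed heuristics for this sketch; tune them to your own editorial rules.
QUESTION_WORDS = ("what", "how", "why", "when", "which", "who", "where",
                  "can", "does", "is", "should")
MAX_PARAGRAPH_WORDS = 80


def audit_sections(markdown_text):
    """Flag H2/H3 headings that are not questions and paragraphs that run long."""
    findings = []
    heading = None
    for block in re.split(r"\n\s*\n", markdown_text.strip()):
        block = block.strip()
        match = re.match(r"^(#{2,3})\s+(.*)$", block)
        if match:
            heading = match.group(2).strip()
            words = heading.split()
            first_word = words[0].lower() if words else ""
            if not (heading.endswith("?") or first_word in QUESTION_WORDS):
                findings.append((heading, "heading is not phrased as a question"))
        elif heading is not None and len(block.split()) > MAX_PARAGRAPH_WORDS:
            findings.append((heading, "paragraph exceeds word limit"))
    return findings


sample = "\n\n".join([
    "## What is LLM visibility?",
    "LLM visibility is how often a brand is cited in AI answers.",
    "## Our story",
    " ".join(["filler"] * 100),
])

findings = audit_sections(sample)
```

A check like this only catches surface structure. Whether a section actually opens with a direct answer still needs an editor's judgment.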

Step 4: Strengthen entity signals and E-E-A-T

LLMs understand your brand through entity relationships. The words and concepts that consistently appear near your brand name across the web form the entity co-mention network that shapes what topics AI associates you with. If there is a gap between the topics you want to be cited for and the topics you are actually associated with in AI responses, that is an entity gap, and it is fixable through deliberate content and PR strategy.

Visible author credentials, last-updated dates, cited sources, and schema markup for FAQ, Article, and Organization all reinforce the trust signals that LLMs use to evaluate whether a source is safe to cite. E-E-A-T optimization is not just a Google concept; it is the foundational credibility framework for LLM citation selection.
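Schema markup is usually embedded as JSON-LD. The sketch below builds `FAQPage` and `Organization` objects using schema.org vocabulary and wraps them in a script tag. The helper names (`faq_jsonld`, `organization_jsonld`, `as_script_tag`) and the sample brand details are hypothetical, and the output should be validated with a structured data testing tool before publishing.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object; sameAs lists official profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def as_script_tag(data):
    """Wrap a JSON-LD object in the script tag that goes in the page HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical example values.
faq = faq_jsonld([
    ("What is LLM visibility?",
     "How often and how favourably a brand appears in LLM responses."),
])
org = organization_jsonld(
    "Example Brand",
    "https://example.com",
    ["https://www.linkedin.com/company/example-brand"],
)
tag = as_script_tag(faq)
```

Generating the markup from the same data that renders the visible FAQ keeps the two in sync, which matters because markup that contradicts the page text undermines the trust signal it is meant to send.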

Step 5: Optimize for novel training data sources

LLMs are trained on data sources that traditional SEO never prioritized. GitHub, Wikipedia, research papers on arXiv and PubMed, patents, and books all form part of the training corpus that shapes what LLMs know and who they associate with specific topics. If your brand is relevant to any of these sources, ensuring your information is accurate and consistently represented there is a low-effort, high-impact action.

Original research is the highest-value content type in this context. When you produce a study, survey, or proprietary dataset that gets cited by other publications, your brand becomes embedded in the source chain that LLMs draw from. Distributing content to a wide range of publications can increase AI citations by up to 325% compared to only publishing on your own site. Research gets distributed. Distribution builds citations. Citations build LLM visibility.

Step 6: Monitor hallucinated URLs and fix them

LLMs frequently cite URLs that do not exist. They infer what a page should be called based on your content and brand, and sometimes get it wrong, sending users to 404 pages. AI assistants send visitors to 404 pages 2.87 times more often than Google Search, according to Ahrefs' analysis of 16 million URLs. Every 404 a user hits from an LLM citation is a lost conversion.

Monitor your server logs and Google Analytics 4 for pages that receive referral traffic from AI platforms (chatgpt.com, perplexity.ai, claude.ai) but return 404 errors. Identify the most common hallucinated URLs and set up 301 redirects to the closest matching real page. This is one of the fastest-impact optimizations available for sites that already have some LLM traffic.
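The log check can be sketched as follows. It assumes access logs in the common "combined" format and that the AI platform passes a Referer header, which is not guaranteed for every visit. The `ai_referred_404s` function, the regular expression, and the sample log lines are illustrative assumptions.

```python
import re
from collections import Counter
from urllib.parse import urlparse

AI_REFERRER_DOMAINS = {"chatgpt.com", "perplexity.ai", "claude.ai"}

# Matches the request, status, size, and referrer fields of a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "(?P<referrer>[^"]*)"'
)


def ai_referred_404s(log_lines):
    """Count 404 responses per path where the referrer is an AI platform."""
    counts = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match or match.group("status") != "404":
            continue
        host = (urlparse(match.group("referrer")).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host in AI_REFERRER_DOMAINS:
            counts[match.group("path")] += 1
    return counts.most_common()


# Hypothetical log lines.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing-guide HTTP/1.1" 404 512 "https://chatgpt.com/" "Mozilla/5.0"',
    '203.0.113.6 - - [10/Jan/2026:10:01:00 +0000] "GET /pricing-guide HTTP/1.1" 404 512 "https://www.perplexity.ai/search" "Mozilla/5.0"',
    '203.0.113.7 - - [10/Jan/2026:10:02:00 +0000] "GET /blog HTTP/1.1" 200 2048 "https://chatgpt.com/" "Mozilla/5.0"',
    '203.0.113.8 - - [10/Jan/2026:10:03:00 +0000] "GET /old-page HTTP/1.1" 404 512 "https://www.google.com/" "Mozilla/5.0"',
]

hallucinated = ai_referred_404s(sample_log)
```

The paths at the top of the resulting list are the first candidates for 301 redirects to the closest matching real page.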

Content formats and page types LLMs prefer to cite

Not all content is equally likely to be cited by LLMs. Analysis of hundreds of millions of AI citations reveals clear patterns in which page types and content formats appear most frequently.

Blog posts and comprehensive guides are the most commonly cited content types. Comparison content (pages titled "best", "top", or "X vs Y") appears heavily because LLMs frequently need to present multiple options to users. Original research and data pages are cited disproportionately because AI systems seek evidence to back their claims. Core website pages (about, contact, product) also appear because they establish the entity and brand identity. PDF documents and video content are cited as well, though less frequently in pure LLM responses.

| Content format | Why LLMs prefer it | Optimization priority |
| --- | --- | --- |
| Comprehensive guides and tutorials | Covers a topic completely, reducing the need to cite multiple sources | High: ensure answer-first structure and full topic coverage |
| Comparison and listicle pages ('best X') | Provides multiple options in one extractable format | High: include structured comparison tables, keep rankings explicit |
| Original research and data | Provides unique evidence that LLMs cannot find elsewhere, and must be cited if used | Highest: publish and distribute original studies aggressively |
| FAQ pages | Maps directly to question-format queries, highly extractable | High: include FAQ schema markup and place FAQ blocks on every guide |
| About and product pages | Establishes entity and brand identity clearly | Medium: optimize for entity clarity and accurate brand description |
| How-to content with numbered steps | Sequential structure is clearly extractable by AI | Medium: use numbered lists, keep steps self-contained |

How to measure LLM visibility for your brand

Measuring LLM visibility requires a combination of direct testing, referral traffic monitoring, and dedicated tracking tools. Standard analytics platforms were not built for this; they attribute LLM-referred sessions to direct or referral traffic without distinguishing them as AI-sourced.

Manual query testing

The most direct method is running a monthly manual query log. Select fifteen to twenty queries central to your brand's topic area. Run each through ChatGPT, Perplexity, and Claude with web search enabled. Record when and how your brand appears, which competitors are cited alongside you, and which of your URLs are referenced. Track this log month over month and correlate changes with content updates or off-site activities you have undertaken.
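A spreadsheet is enough for this log, but the calculation is easy to script. The sketch below assumes each test run is recorded as one row. The `QueryResult` fields, the `citation_rate_by_platform` function, and the sample entries are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class QueryResult:
    """One manual test: a query run on one platform in a given month."""
    month: str
    platform: str
    query: str
    brand_cited: bool


def citation_rate_by_platform(results):
    """Share of tested queries, per platform, in which the brand was cited."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for result in results:
        totals[result.platform] += 1
        cited[result.platform] += int(result.brand_cited)
    return {platform: cited[platform] / totals[platform] for platform in totals}


# Hypothetical log entries for a single month.
log = [
    QueryResult("2026-01", "ChatGPT", "best ai visibility tools", True),
    QueryResult("2026-01", "ChatGPT", "what is llm visibility", False),
    QueryResult("2026-01", "Perplexity", "best ai visibility tools", True),
    QueryResult("2026-01", "Perplexity", "what is llm visibility", True),
    QueryResult("2026-01", "Claude", "best ai visibility tools", False),
]

rates = citation_rate_by_platform(log)
```

Running the same function on each month's rows gives the trend line to compare against content updates and off-site activity.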

Referral traffic monitoring in GA4

In Google Analytics 4, filter referral sessions by source domain. The key domains to track are chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, and copilot.microsoft.com. Create a custom segment for these sources and monitor volume, engagement rate, pages per session, and conversion rate separately from other traffic. Even small LLM referral volumes at high conversion rates indicate the channel is producing real business value.
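Once sessions are exported, the comparison between segments is simple arithmetic. The sketch below assumes a hypothetical export of `(source, converted)` rows. It does not call any GA4 API, and the `conversion_by_segment` function and sample data are invented for illustration.

```python
AI_SOURCES = {
    "chatgpt.com",
    "perplexity.ai",
    "claude.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def conversion_by_segment(sessions):
    """Compute conversion rate for AI-referred sessions versus all others."""
    stats = {"ai": [0, 0], "other": [0, 0]}  # segment -> [sessions, conversions]
    for source, converted in sessions:
        segment = "ai" if source.lower() in AI_SOURCES else "other"
        stats[segment][0] += 1
        stats[segment][1] += int(converted)
    return {
        segment: (conversions / total if total else 0.0)
        for segment, (total, conversions) in stats.items()
    }


# Hypothetical exported rows: (session source, did the session convert?)
sessions = [
    ("chatgpt.com", True),
    ("perplexity.ai", False),
    ("google", False),
    ("google", False),
    ("google", True),
    ("newsletter", False),
]

segment_rates = conversion_by_segment(sessions)
```

In this made-up sample the AI segment converts at 50% against 25% for everything else. With real data the sample sizes will be small at first, so read the rates alongside the raw session counts.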

Dedicated LLM visibility tools

Purpose-built tools for LLM visibility tracking have emerged to automate what manual testing can only sample. These platforms monitor brand mentions, citation rates, and competitive positioning across major AI platforms at scale:

| Tool | What it tracks |
| --- | --- |
| Serplock | A platform for growth and marketing teams to see how AI models perceive your brand, understand where you stand against competitors, and optimize and generate unique content to earn more mentions and build topical authority |
| Ahrefs Brand Radar | Brand citation frequency across ChatGPT, Perplexity, Gemini, and AI Overviews with competitor benchmarking and cited domain analysis |
| LLMrefs | Keyword-level visibility tracking across ChatGPT, Perplexity, Claude, Gemini, and AI Overviews with geo-targeting and fan-out prompt analysis |
| Semrush AI Overview Tracker | Monitors brand appearances in Google AI Overviews with position and frequency tracking over time |
| Superprompt.com | Cross-model AI rank tracking by keyword across ChatGPT, Claude, and Perplexity |
| Google Analytics 4 | Referral traffic from AI platforms, tracked at the session level; requires manual segment creation for LLM sources |

Common LLM visibility mistakes to avoid

| Mistake | Why it hurts | Fix |
| --- | --- | --- |
| Blocking AI crawlers broadly in robots.txt | Removes your content from AI search indexes entirely; no content optimization can compensate | Audit each bot individually: allow retrieval bots, evaluate training bots separately |
| Treating LLM visibility as identical to SEO | Different signals drive citation selection; optimizing only for rankings misses entity and off-site factors | Build an LLM-specific strategy layer above your SEO foundation |
| Ignoring off-site presence | Brand mentions are among the strongest citation signals; a site-only strategy misses the majority of the optimization opportunity | Invest in digital PR, review platform presence, Reddit/Quora participation, and YouTube |
| Publishing AI-generated spam at scale | Google filters it; LLMs are developing the same filtering. Spammy content earns no trust and no citations | Invest in original research, expert-authored content, and depth over volume |
| Not monitoring hallucinated URLs | AI platforms send users to 404 pages 2.87x more than Google does; each is a lost conversion | Check GA4 referral traffic from AI platforms against your 404 logs monthly |
| Measuring only traffic volume | LLM traffic is small in volume but high in intent; volume metrics alone undersell the channel's value | Track conversion rate separately for LLM-referred sessions alongside citation rate |
| Optimizing for one platform only | Only 11% of domains are cited by both ChatGPT and Perplexity; each platform has distinct source preferences | Build cross-platform visibility across ChatGPT, Perplexity, Claude, and Gemini |

Conclusion

LLM visibility is not a future concern. It is an active, growing channel that is already influencing how users discover brands, form opinions, and make purchasing decisions. The infrastructure is in place. The user behaviour is established. The question is no longer whether to optimize for it; it is how quickly you can build the citation footprint that secures your presence in AI-generated answers before your competitors do.

LLM Visibility Lab exists to give you the frameworks, tools, and guides to build this stack, from the technical configuration that lets AI crawlers access your content, to the content strategy that makes your pages worth citing, to the measurement systems that tell you whether it is working. Start with the guides linked above and treat LLM visibility as what it is: the next essential layer of digital presence for any brand that wants to remain visible in 2026 and beyond.